Use this quick start to get up and running with the Confluent Cloud Microsoft SQL Server source connector. The quick start provides the basics of selecting the connector and configuring it to obtain a snapshot of the existing data in a Microsoft SQL Server database and then monitoring and recording all subsequent row-level changes.

Using the Confluent Cloud GUI

Step 2: Add a connector.

Click Connectors. If you already have connectors in your cluster, click Add connector.

Step 3: Select your connector.

Click the Microsoft SQL Server Source connector icon.

Step 4: Set up the connection.

Complete the following and click Continue.

Note

- Make sure you have all your prerequisites completed.

- An asterisk ( * ) designates a required entry.

Enter a connector name.

Enter your Kafka Cluster credentials. The credentials are either the API key and secret or the service account API key and secret.

Enter the topic prefix for the database table name. You use this configuration to specify a Kafka topic (or topics), since this connector creates a topic (or topics) directly based on table names from your database.

Important

Database table names, topic names, and prefixes:

Before launching this connector, you must create topics in your Confluent Cloud cluster that match your source database table names. For example, if you have a database table named passengers, create a Kafka topic named passengers beforehand. If you want to have a topic prefix, the name of the topic or topics you create must also include the prefix.

You can use the following Confluent Cloud CLI command to create a topic:

ccloud kafka topic create <prefix-><table-name>

For example:

ccloud kafka topic create list-passengers

Add the connection details for the database.

Important

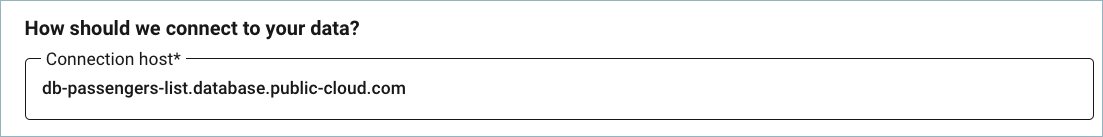

Do not include jdbc:xxxx:// in the Connection host field. The example below shows a sample host address.

Add the Database details for your database. Review the following notes for more information about field selections.

Enter a Timestamp column name to enable timestamp mode. This mode uses a timestamp (or timestamp-like) column to detect new and modified rows. It assumes that the column is updated with each write and that its values are monotonically increasing, but not necessarily unique.

Enter both a Timestamp column name and an Incrementing column name to enable timestamp+incrementing mode. This mode uses two columns, a timestamp column that detects new and modified rows, and a strictly incrementing column which provides a globally unique ID for updates so each row can be assigned a unique stream offset.

By default, the connector only detects tables with type TABLE from the source database. Use VIEW for virtual tables created from joining one or more tables. Use ALIAS for tables with a shortened or temporary name.

If you define a schema pattern in your database, you need to enter the Schema pattern in this field. For more information, search for schema.pattern in JDBC Connector Source Connector Configuration Properties.
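The difference between timestamp mode and timestamp+incrementing mode can be illustrated with a small simulation. The following is a minimal sketch only, not the connector's actual implementation: it uses an in-memory SQLite table in place of SQL Server, and the table layout, the `updated_at` column, and both function names are hypothetical. It shows why a strictly incrementing column helps when several rows share the same timestamp.

```python
import sqlite3

# Hypothetical stand-in for a source table; SQLite replaces SQL Server here.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE passengers (id INTEGER PRIMARY KEY, name TEXT, updated_at INTEGER)"
)
conn.executemany(
    "INSERT INTO passengers VALUES (?, ?, ?)",
    [(1, "ana", 100), (2, "ben", 100), (3, "cy", 105)],
)


def poll_timestamp(conn, last_ts):
    """Timestamp mode (simplified): fetch rows newer than the stored timestamp."""
    return conn.execute(
        "SELECT id, name, updated_at FROM passengers "
        "WHERE updated_at > ? ORDER BY updated_at",
        (last_ts,),
    ).fetchall()


def poll_timestamp_incrementing(conn, last_ts, last_id):
    """Timestamp+incrementing mode (simplified): the (timestamp, id) pair is the offset."""
    return conn.execute(
        "SELECT id, name, updated_at FROM passengers "
        "WHERE updated_at > ? OR (updated_at = ? AND id > ?) "
        "ORDER BY updated_at, id",
        (last_ts, last_ts, last_id),
    ).fetchall()


# Assume the last row already delivered was id=1 with timestamp 100.
ts_only = poll_timestamp(conn, 100)
ts_and_id = poll_timestamp_incrementing(conn, 100, 1)
print(ts_only)
print(ts_and_id)
```

In this simulation, the timestamp-only poll skips the row with id 2 because it shares timestamp 100 with the row already delivered, while the combined offset picks it up.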

Add the Connection details for your connection to the database.

Select an Output message format (data coming from the connector): AVRO, JSON_SR (JSON Schema), PROTOBUF, or JSON (schemaless). A valid schema must be available in Schema Registry to use a schema-based message format (for example, Avro, JSON_SR (JSON Schema), or Protobuf).

Enter the number of tasks in use by the connector. Refer to Confluent Cloud connector limitations for additional information.

Step 5: Launch the connector.

Verify the connection details and click Launch.

Step 6: Check the connector status.

The status for the connector should go from Provisioning to Running. It may take a few minutes.

Step 7: Check the Kafka topic.

After the connector is running, verify that messages are populating your Kafka topic.

You can manage your full-service connector using the Confluent Cloud API. For details, see the Confluent Cloud API documentation.

For additional information about this connector, see JDBC Connector (Source and Sink) for Confluent Platform. Note that not all Confluent Platform connector features are provided in the Confluent Cloud connector.

See also

For an example that shows fully-managed Confluent Cloud connectors in action with Confluent Cloud ksqlDB, see the Cloud ETL Demo. This example also shows how to use Confluent Cloud CLI to manage your resources in Confluent Cloud.

Using the Confluent Cloud CLI

Complete the following steps to set up and run the connector using the Confluent Cloud CLI.

Important

Database table names, topic names, and prefixes:

Before launching this connector, you must create topics in your Confluent Cloud cluster that match your source database table names. For example, if you have a database table named passengers, create a Kafka topic named passengers beforehand. If you want to have a topic prefix, the name of the topic or topics you create must also include the prefix.

You can use the following Confluent Cloud CLI command to create a topic:

ccloud kafka topic create <prefix-><table-name>

For example:

ccloud kafka topic create list-passengers
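If you have several tables, it can help to generate the topic names before you create them. The following is a minimal sketch, assuming the topic name is the prefix followed directly by the table name as described above. The function names and the `flights` table are hypothetical. The script only prints the commands and does not run them.

```python
def expected_topics(tables, prefix=""):
    """Return the topic name for each table: prefix followed by the table name."""
    return [f"{prefix}{table}" for table in tables]


def create_commands(tables, prefix=""):
    """Build one topic-creation command line per expected topic."""
    return [f"ccloud kafka topic create {topic}" for topic in expected_topics(tables, prefix)]


# "flights" is a made-up second table used only for illustration.
for command in create_commands(["passengers", "flights"], prefix="microsoftsql_"):
    print(command)
```

Printing the commands first lets you review the topic names against your database tables before you create anything in the cluster.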

Step 1: List the available connectors.

Enter the following command to list available connectors:

ccloud connector-catalog list

Step 2: Show the required connector configuration properties.

Enter the following command to show the required connector properties:

ccloud connector-catalog describe <connector-catalog-name>

For example:

ccloud connector-catalog describe MicrosoftSqlServerSource

Example output:

Following are the required configs:

connector.class

name

kafka.api.key

kafka.api.secret

topic.prefix

connection.host

connection.port

connection.user

connection.password

db.name

table.whitelist

timestamp.column.name

output.data.format

tasks.max

Step 3: Create the connector configuration file.

Create a JSON file that contains the connector configuration properties. The following example shows the required connector properties.

{
  "name": "confluent-microsoft-sql-source",
  "connector.class": "MicrosoftSqlServerSource",
  "kafka.api.key": "<my-kafka-api-key>",
  "kafka.api.secret": "<my-kafka-api-secret>",
  "topic.prefix": "microsoftsql_",
  "connection.host": "<my-database-endpoint>",
  "connection.port": "1433",
  "connection.user": "<database-username>",
  "connection.password": "<database-password>",
  "db.name": "ms-sql-test",
  "table.whitelist": "passengers",
  "timestamp.column.name": "created_at",
  "output.data.format": "JSON",
  "db.timezone": "UTC",
  "tasks.max": "1"
}

Note the following property definitions:

- "topic.prefix": Used to create the topic name. The connector creates a topic or topics directly based on table names from your database. The Kafka topic name created is a combination of the topic prefix plus the table name.

- "output.data.format": Sets the output message format (data coming from the connector). Valid entries are AVRO, JSON_SR, PROTOBUF, or JSON. You must have Confluent Cloud Schema Registry configured if using a schema-based message format (for example, Avro, JSON_SR (JSON Schema), or Protobuf).

- "db.timezone": Identifies the database timezone. This can be any valid database timezone. The default is UTC. For more information, see this list of database timezones.
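Before you load the file, you may want to check it locally. The following is a minimal sketch, not an official validation tool: it checks a configuration against the required properties listed in Step 2 and the output formats named above. The `check_config` function is hypothetical, and the connector may apply further validation that this sketch does not cover.

```python
import json

# Required properties as listed in the Step 2 example output.
REQUIRED = [
    "connector.class", "name", "kafka.api.key", "kafka.api.secret",
    "topic.prefix", "connection.host", "connection.port", "connection.user",
    "connection.password", "db.name", "table.whitelist",
    "timestamp.column.name", "output.data.format", "tasks.max",
]
VALID_FORMATS = {"AVRO", "JSON_SR", "PROTOBUF", "JSON"}


def check_config(config):
    """Return a list of problems found in the config; an empty list means none."""
    problems = [f"missing required property: {key}" for key in REQUIRED if key not in config]
    fmt = config.get("output.data.format")
    if fmt is not None and fmt not in VALID_FORMATS:
        problems.append(f"unsupported output.data.format: {fmt}")
    return problems


example = json.loads("""
{
  "name": "confluent-microsoft-sql-source",
  "connector.class": "MicrosoftSqlServerSource",
  "kafka.api.key": "<my-kafka-api-key>",
  "kafka.api.secret": "<my-kafka-api-secret>",
  "topic.prefix": "microsoftsql_",
  "connection.host": "<my-database-endpoint>",
  "connection.port": "1433",
  "connection.user": "<database-username>",
  "connection.password": "<database-password>",
  "db.name": "ms-sql-test",
  "table.whitelist": "passengers",
  "timestamp.column.name": "created_at",
  "output.data.format": "JSON",
  "tasks.max": "1"
}
""")
print(check_config(example))
```

To check your own file, load it with `json.load` and pass the resulting dictionary to `check_config`.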

Step 4: Load the properties file and create the connector.

Enter the following command to load the configuration and start the connector:

ccloud connector create --config <file-name>.json

For example:

ccloud connector create --config microsoft-sql-source.json

Example output:

Created connector confluent-microsoft-sql-source lcc-ix4dl

Step 5: Check the connector status.

Enter the following command to check the connector status:

ccloud connector list

Example output:

     ID     |              Name              | Status  | Type
+-----------+--------------------------------+---------+-------+
  lcc-ix4dl | confluent-microsoft-sql-source | RUNNING | source
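If you script the status check, you can parse the table output. The following is a minimal sketch, assuming the output keeps the pipe-delimited layout shown in the example output above. The `parse_status_table` function is hypothetical, and the actual CLI output format may differ between versions.

```python
def parse_status_table(text):
    """Return {connector name: status} from pipe-delimited status table text."""
    statuses = {}
    for line in text.splitlines():
        cells = [cell.strip() for cell in line.split("|")]
        # Skip blank lines, the +---+ separator row, and the header row.
        if len(cells) != 4 or cells[0] in ("", "ID"):
            continue
        _connector_id, name, status, _kind = cells
        statuses[name] = status
    return statuses


# Sample text copied from the example output in this step.
sample = """\
     ID     |              Name              | Status  | Type
+-----------+--------------------------------+---------+-------+
  lcc-ix4dl | confluent-microsoft-sql-source | RUNNING | source
"""

statuses = parse_status_table(sample)
is_running = statuses.get("confluent-microsoft-sql-source") == "RUNNING"
print(statuses, is_running)
```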

Step 6: Check the Kafka topic.

After the connector is running, verify that messages are populating your Kafka topic.

You can manage your full-service connector using the Confluent Cloud API. For details, see the Confluent Cloud API documentation.

For additional information about this connector, see JDBC Connector (Source and Sink) for Confluent Platform. Note that not all Confluent Platform connector features are provided in the Confluent Cloud connector.