PostgreSQL CDC Source Connector (Debezium) for Confluent Cloud

The Kafka Connect PostgreSQL Change Data Capture (CDC) Source connector (Debezium) for Confluent Cloud can obtain a snapshot of the existing data in a PostgreSQL database and then monitor and record all subsequent row-level changes to that data. The connector supports Avro, JSON Schema, Protobuf, or JSON (schemaless) output data formats. All of the events for each table are recorded in a separate Apache Kafka® topic. The events can then be easily consumed by applications and services. Note that deleted records are not captured.

Important

After this connector becomes generally available, Confluent Cloud Enterprise customers will need to contact their Confluent Account Executive for more information about using this connector.

Features

The PostgreSQL CDC Source connector (Debezium) provides the following features:

- Topics created automatically: The connector automatically creates Kafka topics using the naming convention <database.server.name>.<schemaName>.<tableName>. The topics are created with the properties topic.creation.default.partitions=1 and topic.creation.default.replication.factor=3.

- Logical decoding plugins supported: wal2json, wal2json_rds, wal2json_streaming, wal2json_rds_streaming, pgoutput, and decoderbufs. The default is pgoutput.

- Database authentication: Uses password authentication.

- Data Format with or without a Schema: The connector supports Avro, JSON Schema, Protobuf, JSON (schemaless), or Bytes. Schema Registry must be enabled to use a Schema Registry-based format (for example, Avro, JSON_SR (JSON Schema), or Protobuf).

- Select configuration properties:

- Tables included and Tables excluded: Allows you to set whether a table is or is not monitored for changes. By default, the connector monitors every non-system table.

- Snapshot mode: Allows you to specify the criteria for running a snapshot.

- Tombstones on delete: Allows you to configure whether a tombstone event should be generated after a delete event. The default is true.

- Other configuration properties:

- poll.interval.ms

- max.batch.size

- max.queue.size
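As a small illustration of the topic naming convention from the features list above, the following sketch builds the topic name that a captured table maps to. The function name `topic_name` is hypothetical and is not part of any Confluent tooling; the example values (`cdc`, `public`, `passengers`) are taken from the sample configuration later in this page.

```python
def topic_name(server_name: str, schema_name: str, table_name: str) -> str:
    """Build a topic name of the form <database.server.name>.<schemaName>.<tableName>.

    Hypothetical helper for illustration only; the connector derives
    topic names itself.
    """
    return f"{server_name}.{schema_name}.{table_name}"


if __name__ == "__main__":
    # With "database.server.name": "cdc" and the table public.passengers:
    print(topic_name("cdc", "public", "passengers"))
```

Knowing the resulting name in advance is useful when you set up ACLs or consumers before the connector starts.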

You can manage your full-service connector using the Confluent Cloud API. For details, see the Confluent Cloud API documentation.

For more information, see the Confluent Cloud connector limitations.

Caution

Preview connectors are not currently supported and are not recommended for production use.

Quick Start

Use this quick start to get up and running with the Confluent Cloud PostgreSQL CDC Source (Debezium) connector. The quick start provides the basics of selecting the connector and configuring it to obtain a snapshot of the existing data in a PostgreSQL database and then monitor and record all subsequent row-level changes.

- Prerequisites

Authorized access to a Confluent Cloud cluster on Amazon Web Services (AWS), Microsoft Azure (Azure), or Google Cloud Platform (GCP).

The Confluent Cloud CLI installed and configured for the cluster. See Install the Confluent Cloud CLI.

Schema Registry must be enabled to use a Schema Registry-based format (for example, Avro, JSON_SR (JSON Schema), or Protobuf).

You cannot use a basic database with Azure. You must use a general purpose or memory-optimized PostgreSQL database.

The PostgreSQL database must be configured for CDC. For details, see PostgreSQL in the Cloud.

Clients from Azure Virtual Networks are not allowed to access the server by default. Please make sure your Azure Virtual Network is correctly configured and enable “Allow access to Azure Services”.

Public access may be required for your database. See Internet Access to Resources for details. The following example shows the AWS Management Console when setting up a PostgreSQL database.

A parameter group with the property rds.logical_replication=1 is required. An example is shown below. Once created, you must reboot the database.

Public inbound traffic access (0.0.0.0/0) may be required for the VPC where the database is located, unless the environment is configured for VPC peering. See Internet Access to Resources for details. The following example shows the AWS Management Console when setting up security group rules for the VPC.

Note

See your specific cloud platform documentation for how to configure security rules for your VPC.

Kafka cluster credentials. You can use one of the following ways to get credentials:

- Create a Confluent Cloud API key and secret. To create a key and secret, go to Kafka API keys in your cluster, or autogenerate the API key and secret directly in the UI when setting up the connector.

- Create a Confluent Cloud service account for the connector.

ACLs that allow the service account to create and write to topics matching a topic prefix are required. The prefix is the database server name (for example, cdc if you set the configuration property "database.server.name": "cdc"). See ccloud kafka acl create for the CLI command reference.

ccloud kafka acl create --allow --service-account "<service-account-id>" --operation "CREATE" --prefix --topic "<database.server.name>"

ccloud kafka acl create --allow --service-account "<service-account-id>" --operation "WRITE" --prefix --topic "<database.server.name>"
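To make the effect of the `--prefix` flag in the commands above more concrete, here is a minimal sketch of how a prefixed ACL relates to topic names: a prefixed rule applies to every topic whose name starts with the given string. The function `prefix_acl_covers` is a hypothetical name used only for this illustration and does not call Confluent Cloud.

```python
def prefix_acl_covers(prefix: str, topic: str) -> bool:
    """Return True if a prefixed ACL for `prefix` would apply to `topic`.

    Illustration only: prefixed matching is a plain starts-with comparison
    on the topic name.
    """
    return topic.startswith(prefix)


if __name__ == "__main__":
    # Topics created by the connector for server name "cdc" are covered.
    print(prefix_acl_covers("cdc", "cdc.public.passengers"))
    # An unrelated topic is not covered.
    print(prefix_acl_covers("cdc", "orders.public.items"))
```

Because the match is a simple string prefix, a short server name such as `cdc` also covers any other topic whose name happens to begin with `cdc`, so a distinctive server name keeps the ACL narrow.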

Using the Confluent Cloud GUI

Step 2: Add a connector.

Click Connectors. If you already have connectors in your cluster, click Add connector.

Step 3: Select your connector.

Click the PostgreSQL CDC Source connector icon.

Step 4: Set up the connection.

Complete the following and click Continue.

Note

- Make sure you have all your prerequisites completed.

- An asterisk ( * ) designates a required entry.

Enter a connector name.

Enter your Kafka Cluster credentials. The credentials are either the API key and secret or the service account API key and secret.

Add the connection details for the database.

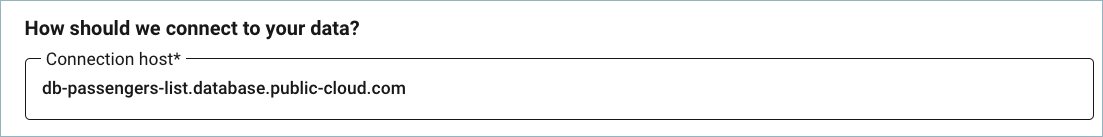

Important

Do not include jdbc:xxxx:// in the Connection host field. The example below shows a sample host address.

Add the Database details for your database. Review the following notes for more information about field selections.

- Tables included: Enter a comma-separated list of fully-qualified table identifiers for the connector to monitor. By default, the connector monitors all non-system tables. A fully-qualified table name is in the form schemaName.tableName.

- Tables excluded: Enter a comma-separated list of fully-qualified table identifiers for the connector to ignore. A fully-qualified table name is in the form schemaName.tableName. This property cannot be used with the property Tables included.

- Snapshot mode: Specifies the criteria for performing a database snapshot when the connector starts.

- initial (the default): The connector takes a snapshot of the structure and data from captured tables. This is useful if you want the topics populated with a complete representation of captured table data when the connector starts.

- never: The connector never performs snapshots. When starting for the first time, the connector starts reading from where it last left off.

- exported: The database snapshot is based on the point in time when a replication slot was created. This is a good way to perform a lock-free snapshot (see Snapshot isolation).

- Tombstones on delete: Configure whether a tombstone event should be generated after a delete event. The default is true.

- Plugin name: Select the PostgreSQL logical decoding plugin to use. The default is pgoutput.
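The interaction between Tables included and Tables excluded described above can be sketched as follows. This is an illustration under assumptions, not the connector's implementation: the helper names are hypothetical, and the sketch uses exact schemaName.tableName matching only, whereas the connector may apply richer matching.

```python
from typing import Iterable, List, Optional


def parse_list(value: Optional[str]) -> List[str]:
    """Split a comma-separated property value into trimmed, non-empty entries."""
    return [item.strip() for item in (value or "").split(",") if item.strip()]


def monitored_tables(
    all_tables: Iterable[str],
    included: Optional[str] = None,
    excluded: Optional[str] = None,
) -> List[str]:
    """Return the tables that would be monitored (exact-name matching assumed)."""
    include = parse_list(included)
    exclude = parse_list(excluded)
    if include and exclude:
        # The two properties cannot be used together.
        raise ValueError("Tables included and Tables excluded cannot both be set")
    if include:
        return [table for table in all_tables if table in include]
    # With neither property set, every (non-system) table is monitored.
    return [table for table in all_tables if table not in exclude]


if __name__ == "__main__":
    tables = ["public.passengers", "public.flights"]
    print(monitored_tables(tables, included="public.passengers"))
    print(monitored_tables(tables, excluded="public.flights"))
```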

Add the Connection details for your connection to the database.

Select the values for the following properties:

Output message format: (data coming from the connector): AVRO, JSON (schemaless), JSON_SR (JSON Schema), or PROTOBUF. A valid schema must be available in Schema Registry to use a schema-based message format (for example, Avro, JSON_SR (JSON Schema), or Protobuf).

After-state only: (Optional) Defaults to true, which results in the Kafka record having only the record state from change events applied. Select false to maintain the prior record states after applying the change events.

JSON output decimal format: (Optional) Defaults to BASE64.

Enter the number of tasks in use by the connector. Refer to Confluent Cloud connector limitations for additional information.
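To illustrate the After-state only setting described above, the sketch below contrasts the two record shapes. It assumes a change event shaped like a Debezium envelope with `before`, `after`, `op`, and `ts_ms` fields; the exact record layout produced by the Confluent Cloud connector may differ, and the function `apply_after_state_only` is a hypothetical name used only here.

```python
from typing import Any, Dict, Optional


def apply_after_state_only(
    envelope: Dict[str, Any], after_state_only: bool = True
) -> Optional[Dict[str, Any]]:
    """Return the record value a consumer would see for one change event.

    Assumption: `envelope` follows the Debezium change-event layout.
    With after_state_only=True only the row state after the change is kept;
    with False the full envelope, including the prior state, is kept.
    """
    if after_state_only:
        return envelope.get("after")
    return envelope


if __name__ == "__main__":
    # Hypothetical update event for a row in public.passengers.
    event = {
        "before": {"id": 1, "name": "Ann"},
        "after": {"id": 1, "name": "Anne"},
        "op": "u",
        "ts_ms": 0,
    }
    print(apply_after_state_only(event, after_state_only=True))
    print(apply_after_state_only(event, after_state_only=False))
```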

Step 5: Launch the connector.

Verify the connection details and click Launch.

Step 6: Check the connector status.

The status for the connector should go from Provisioning to Running. It may take a few minutes.

Step 7: Check the Kafka topic.

After the connector is running, verify that messages are populating your Kafka topic.


For additional information about this connector, see Debezium PostgreSQL Source Connector for Confluent Platform. Note that not all Confluent Platform connector features are provided in the Confluent Cloud connector.

See also

For an example that shows fully-managed Confluent Cloud connectors in action with Confluent Cloud ksqlDB, see the Cloud ETL Demo. This example also shows how to use Confluent Cloud CLI to manage your resources in Confluent Cloud.

Using the Confluent Cloud CLI

Complete the following steps to set up and run the connector using the Confluent Cloud CLI.

Step 1: List the available connectors.

Enter the following command to list available connectors:

ccloud connector-catalog list

Step 2: Show the required connector configuration properties.

Enter the following command to show the required connector properties:

ccloud connector-catalog describe <connector-catalog-name>

For example:

ccloud connector-catalog describe PostgresCdcSource

Example output:

Following are the required configs:

connector.class: PostgresCdcSource

name

kafka.api.key

kafka.api.secret

database.hostname

database.user

database.dbname

database.server.name

output.data.format

tasks.max

Step 3: Create the connector configuration file.

Create a JSON file that contains the connector configuration properties. The following example shows the required connector properties.

{

"connector.class": "PostgresCdcSource",

"name": "PostgresCdcSourceConnector_0",

"kafka.api.key": "****************",

"kafka.api.secret": "****************************************************************",

"database.hostname": "debezium-1.<host-id>.us-east-2.rds.amazonaws.com",

"database.port": "5432",

"database.user": "postgres",

"database.password": "**************",

"database.dbname": "postgres",

"database.server.name": "cdc",

"table.whitelist":"public.passengers",

"plugin.name": "pgoutput",

"output.data.format": "JSON",

"tasks.max": "1"

}
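Before loading a file like the one above, it can help to confirm that every required property is present. The following is a minimal sketch, assuming the required property names are exactly those printed by `ccloud connector-catalog describe PostgresCdcSource` in Step 2; the helper names `missing_required` and `load_and_check` are hypothetical.

```python
import json
from typing import Dict, List

# Required property names, copied from the Step 2 example output.
REQUIRED_PROPERTIES = [
    "connector.class",
    "name",
    "kafka.api.key",
    "kafka.api.secret",
    "database.hostname",
    "database.user",
    "database.dbname",
    "database.server.name",
    "output.data.format",
    "tasks.max",
]


def missing_required(config: Dict[str, str]) -> List[str]:
    """Return the required property names that are absent from `config`."""
    return [key for key in REQUIRED_PROPERTIES if key not in config]


def load_and_check(path: str) -> List[str]:
    """Read a JSON connector configuration file and report missing properties."""
    with open(path, encoding="utf-8") as handle:
        return missing_required(json.load(handle))


if __name__ == "__main__":
    partial = {"connector.class": "PostgresCdcSource", "name": "PostgresCdcSourceConnector_0"}
    print(missing_required(partial))
```

A call such as `load_and_check("postgresql-cdc-source.json")` returns an empty list when nothing required is missing.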

Note the following property definitions:

"connector.class": Identifies the connector plugin name.

"name": Sets a name for your new connector.

"table.whitelist": (Optional) Enter a comma-separated list of fully-qualified table identifiers for the connector to monitor. By default, the connector monitors all non-system tables. A fully-qualified table name is in the form schemaName.tableName.

"plugin.name": (Optional) Sets the plugin to use. Options are wal2json, wal2json_rds, wal2json_streaming, wal2json_rds_streaming, pgoutput, and decoderbufs. The default is pgoutput.

"output.data.format": Sets the output message format (data coming from the connector). Valid entries are AVRO, JSON_SR, PROTOBUF, or JSON. You must have Confluent Cloud Schema Registry configured if using a schema-based message format (for example, Avro, JSON_SR (JSON Schema), or Protobuf).

"after.state.only": (Optional) Defaults to true, which results in the Kafka record having only the record state from change events applied. Enter false to maintain the prior record states after applying the change events.

"json.output.decimal.format": (Optional) Defaults to BASE64. Specify the JSON/JSON_SR serialization format for Connect DECIMAL logical type values with two allowed literals:

- BASE64 to serialize DECIMAL logical types as base64 encoded binary data.

- NUMERIC to serialize Connect DECIMAL logical type values in JSON or JSON_SR as a number representing the decimal value.
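The difference between the two literals above can be seen from the consumer side. The sketch below assumes the usual Kafka Connect DECIMAL representation, in which the unscaled integer value is stored as big-endian two's-complement bytes and the scale is carried separately (for example in the schema); with schemaless JSON the consumer would need to know the scale by other means. The function names are hypothetical.

```python
import base64
from decimal import Decimal


def encode_decimal_base64(value: Decimal, scale: int) -> str:
    """Encode a decimal the way a BASE64-formatted DECIMAL field would appear.

    Assumption: the bytes are the minimal big-endian two's-complement form
    of the unscaled value (value * 10**scale).
    """
    unscaled = int(value.scaleb(scale))
    bits = unscaled.bit_length() if unscaled >= 0 else (~unscaled).bit_length()
    raw = unscaled.to_bytes(bits // 8 + 1, "big", signed=True)
    return base64.b64encode(raw).decode("ascii")


def decode_decimal_base64(text: str, scale: int) -> Decimal:
    """Recover the decimal value from its base64 form and a known scale."""
    unscaled = int.from_bytes(base64.b64decode(text), "big", signed=True)
    return Decimal(unscaled).scaleb(-scale)


if __name__ == "__main__":
    encoded = encode_decimal_base64(Decimal("123.45"), scale=2)
    print(encoded)                            # MDk=
    print(decode_decimal_base64(encoded, 2))  # 123.45
```

With NUMERIC, the same field would instead appear directly as the number 123.45 in the JSON document, which is easier to read but depends on the consumer's JSON parser handling the precision.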

"tasks.max": Enter the number of tasks in use by the connector. Refer to Confluent Cloud connector limitations for additional information.

"snapshot.mode": Specifies the criteria for performing a database snapshot when the connector starts.

- initial (the default): The connector takes a snapshot of the structure and data from captured tables. This is useful if you want the topics populated with a complete representation of captured table data when the connector starts.

- never: The connector never performs snapshots. When starting for the first time, the connector starts reading from where it last left off.

- exported: The database snapshot is based on the point in time when a replication slot was created. This is a good way to perform a lock-free snapshot (see Snapshot isolation).

Configuration properties that are not listed use the default values. For default values and property definitions, see PostgreSQL Source Connector (Debezium) Configuration Properties.
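The enumerated values named in the property definitions above can be checked before the configuration is submitted. This is a sketch under assumptions: the allowed values are copied from this page, the comparison is case-sensitive (which may be stricter than the service), and `invalid_values` is a hypothetical helper name.

```python
from typing import Dict

# Allowed values as listed in the property definitions on this page.
ALLOWED_VALUES = {
    "output.data.format": {"AVRO", "JSON_SR", "PROTOBUF", "JSON"},
    "plugin.name": {
        "wal2json",
        "wal2json_rds",
        "wal2json_streaming",
        "wal2json_rds_streaming",
        "pgoutput",
        "decoderbufs",
    },
    "snapshot.mode": {"initial", "never", "exported"},
    "json.output.decimal.format": {"BASE64", "NUMERIC"},
}


def invalid_values(config: Dict[str, str]) -> Dict[str, str]:
    """Return the properties in `config` whose value is not in the allowed set.

    Properties that are not set are skipped, since they use default values.
    """
    return {
        key: config[key]
        for key, allowed in ALLOWED_VALUES.items()
        if key in config and config[key] not in allowed
    }


if __name__ == "__main__":
    config = {"plugin.name": "pgoutput", "output.data.format": "JSON", "snapshot.mode": "always"}
    print(invalid_values(config))
```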

Step 4: Load the properties file and create the connector.

Enter the following command to load the configuration and start the connector:

ccloud connector create --config <file-name>.json

For example:

ccloud connector create --config postgresql-cdc-source.json

Example output:

Created connector PostgresCdcSourceConnector_0 lcc-ix4dl

Step 5: Check the connector status.

Enter the following command to check the connector status:

ccloud connector list

Example output:

     ID     |             Name             | Status  | Type
+-----------+------------------------------+---------+--------+
  lcc-ix4dl | PostgresCdcSourceConnector_0 | RUNNING | source
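If you want to check the status from a script, output in the shape shown above can be parsed with a few lines of code. The sketch below works on the text only and does not invoke the CLI; it assumes the four pipe-separated columns from the example output, and `parse_connector_rows` is a hypothetical helper name.

```python
from typing import Dict, List

COLUMNS = ("id", "name", "status", "type")


def parse_connector_rows(output: str) -> List[Dict[str, str]]:
    """Parse pipe-separated status rows into dictionaries.

    Header, separator, and blank lines are skipped.
    """
    rows = []
    for line in output.splitlines():
        cells = [cell.strip() for cell in line.split("|")]
        if len(cells) != len(COLUMNS) or cells[0] == "ID":
            continue
        rows.append(dict(zip(COLUMNS, cells)))
    return rows


if __name__ == "__main__":
    sample = """
         ID     |             Name             | Status  | Type
    +-----------+------------------------------+---------+--------+
      lcc-ix4dl | PostgresCdcSourceConnector_0 | RUNNING | source
    """
    for row in parse_connector_rows(sample):
        print(row["name"], row["status"])
```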

Step 6: Check the Kafka topic.

After the connector is running, verify that messages are populating your Kafka topic.


Next Steps

See also

For an example that shows fully-managed Confluent Cloud connectors in action with Confluent Cloud ksqlDB, see the Cloud ETL Demo. This example also shows how to use Confluent Cloud CLI to manage your resources in Confluent Cloud.